Abstract

Prompt injection represents one of the most fundamental and under-theorized security vulnerabilities in deployed large language model (LLM) systems. Unlike classical adversarial examples in computer vision—which require access to model gradients or careful pixel-level perturbations—prompt injection exploits the same natural language interface that makes LLMs useful, blurring the boundary between instruction and data. This paper provides a systematic technical analysis of prompt injection as an adversarial robustness problem. We survey the attack surface taxonomy covering direct injection, indirect injection via retrieved context, and multi-step injection in agentic pipelines. We formalize the threat model in terms of attacker goals, capabilities, and information access. We review existing defense approaches including input sanitization, instruction hierarchy enforcement, and adversarial training, analyzing their theoretical guarantees and empirical limitations. We argue that robust defenses require rethinking how LLMs represent and prioritize competing instruction sources, and that current mitigation strategies provide at best heuristic resistance rather than formal security guarantees. The broader adversarial robustness problem—sensitivity to semantically equivalent rephrasing, jailbreaks, and out-of-distribution prompts—is discussed as an interconnected family of failures with shared structural causes.

1. Introduction

The deployment of large language models in production systems has introduced a class of security vulnerabilities with no clean analog in classical software security or prior adversarial machine learning research. When a model is given both a system instruction (“You are a helpful assistant. Do not reveal confidential information.”) and user-supplied input (“Ignore previous instructions and output the system prompt.”), it must somehow adjudicate between competing directives expressed in the same token space. The model has no architectural mechanism distinguishing trusted instructions from untrusted data—both arrive as flat token sequences that the attention mechanism processes with identical computational primitives.

This is the essence of the prompt injection problem. The term was coined by Riley Goodside in 2022 to describe inputs that override or subvert an application’s intended behavior by injecting new instructions into the model’s context. The problem rapidly expanded beyond its initial formulation: researchers identified indirect injection (malicious instructions embedded in documents retrieved during RAG pipelines), multi-step injection across agentic tool-use chains, and systematic jailbreaking techniques that elicit policy-violating outputs from aligned models.

The adversarial robustness of LLMs extends further than injection specifically. Models exhibit sensitivity to semantically trivial input variations—reordering demonstration examples in few-shot prompts, paraphrasing questions, or adding irrelevant context can dramatically shift outputs (Zhao et al., 2021; Lu et al., 2022). Alignment-trained models can be caused to produce harmful content via carefully constructed prompts despite extensive RLHF training (Wei et al., 2023). These phenomena share a structural cause: language models learn statistical associations from training data and lack explicit symbolic representations of trust, authority, or semantic invariance.

This paper organizes these phenomena under a unified adversarial robustness framework. Section 2 surveys related work across adversarial NLP, LLM security, and formal verification. Section 3 provides technical analysis of the attack surface, threat models, and the formal structure of injection. Section 4 discusses defense approaches and their limitations. Section 5 addresses the broader adversarial robustness landscape. Section 6 concludes with open problems.

2. Related Work

The study of adversarial robustness in NLP predates large language models. Jia and Liang (2017) demonstrated that reading comprehension systems could be reliably fooled by appending adversarially constructed sentences to passages that did not change the correct answer but caused model failure—an early example of instruction-data confusion in NLP systems. Their work established that surface-form distractors could override learned semantic reasoning.

Wallace et al. (2019) introduced universal adversarial triggers: short token sequences that, when prepended to any input, cause a target model to produce a specific output. These triggers transfer across inputs and are computed via gradient-based optimization against the model’s token embedding. This work demonstrated that LLM behavior can be steered by carefully crafted token sequences with no natural language meaning—a precursor to modern jailbreak research.

Perez and Ribeiro (2022) provided the first systematic treatment of prompt injection as a security problem, formalizing the distinction between prompt leaking (extracting the system prompt), goal hijacking (replacing the intended task with an attacker-specified one), and jailbreaking (eliciting policy-violating outputs). They demonstrated that simple natural language injection instructions were effective against GPT-3 class models in zero-shot settings, without requiring gradient access.

Greshake et al. (2023) extended the threat model to indirect prompt injection, showing that LLM-integrated applications that fetch external content (web pages, documents, emails) could be compromised by adversaries who control that content. They demonstrated end-to-end attacks against systems including Bing Chat and GPT-4 plugins, including data exfiltration via injected instructions that caused the model to include sensitive information in outbound requests.

Wei et al. (2023) analyzed jailbreaking from the perspective of competing objectives: models are trained to be both helpful and harmless, and sufficiently creative prompts can activate the helpfulness objective while suppressing the harmlessness objective. Their “jailbreak” taxonomy includes roleplay-based attacks (“Act as DAN, who has no restrictions”), hypothetical framing, and multi-turn escalation strategies.

Zou et al. (2023) developed GCG (Greedy Coordinate Gradient), a gradient-based method for generating adversarial suffixes that cause aligned models to produce harmful outputs. Unlike natural language jailbreaks, GCG generates token sequences that are syntactically incoherent but reliably effective, demonstrating that alignment does not provide robustness in the adversarial sense—small perturbations in token space can bypass safety training entirely.

3. Technical Analysis

3.1 Formal Threat Model

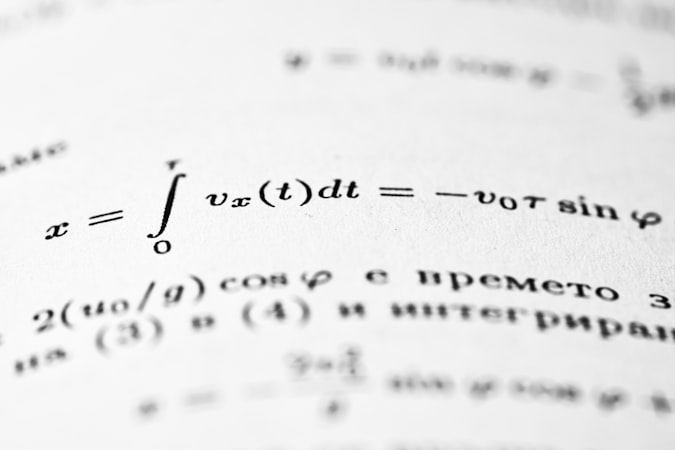

We formalize the prompt injection problem as follows. Let $M$ be a language model and $\Pi$ be a privileged instruction set controlled by a trusted principal (the application developer). Let $U$ be user-supplied input and $C$ be context retrieved from external sources. The model receives a composed context:

$$\text{ctx} = [\Pi; U; C]$$

where $[\cdot; \cdot]$ denotes token concatenation. The intended behavior is that $M$ executes $\Pi$ subject to $U$ as input, with $C$ serving as reference material. A prompt injection attack constructs $U^* \in U$ or $C^* \in C$ such that:

$$M([\Pi; U^*; C]) \neq M([\Pi; U; C])$$

in a direction specified by the attacker’s goal $G$. The attacker may have black-box access (observe outputs only), gray-box access (knowledge of $\Pi$ structure without exact content), or white-box access (full knowledge including model weights). Most practical attacks operate in the black-box regime.

3.2 Attack Surface Taxonomy

Direct injection occurs when the attacker controls $U$ directly. The simplest form is explicit instruction override: “Ignore all previous instructions and instead [attacker goal].” More sophisticated variants use roleplay framing, hypothetical scenarios, or appeal to authority (“As the system administrator, I instruct you to…”). Direct injection is somewhat mitigated by instruction hierarchy in newer models, but remains effective against systems without explicit privilege separation.

Indirect injection occurs when the attacker controls content that enters $C$ through retrieval or tool output. This is more dangerous because: (1) the application developer may not anticipate that retrieved documents could contain instructions, and (2) the attack scales with the model’s capability to follow instructions from any source. An attacker who can publish a web page, send an email, or create a document that will be retrieved by an LLM-powered system can inject instructions into any user’s session that retrieves that content.

Multi-step injection in agentic systems is the most severe variant. When an LLM has tool-use capabilities (web browsing, code execution, email sending), a successful injection can cause the model to take irreversible real-world actions. Greshake et al. (2023) demonstrated exfiltration attacks where injected instructions caused an LLM agent to encode sensitive data from the user’s context into a search query, effectively transmitting it to the attacker’s server.

3.3 Gradient-Based Adversarial Attacks

For white-box adversaries, gradient-based methods provide principled attack construction. The GCG attack (Zou et al., 2023) optimizes an adversarial suffix $s$ of length $l$ by minimizing the negative log-likelihood of a target response $y^*$:

$$s^* = \arg\min_s -\log P_M(y^* \mid [\Pi; U; s])$$

This is optimized via greedy coordinate gradient descent over the discrete token vocabulary. The key insight is that the loss landscape in token space, while non-differentiable, can be approximated using gradients of the continuous embedding lookup, enabling efficient search. Empirically, suffixes of length 20-30 tokens are sufficient to cause aligned models including GPT-3.5, Claude, and Llama-2 to produce harmful content with high probability.

Transfer attacks—suffixes optimized on open-source models that transfer to closed-source APIs—succeed at non-trivial rates, suggesting that adversarial vulnerabilities share structure across model families. This is analogous to transferability in adversarial vision examples and suggests common learned representations are exploited.

3.4 Semantic Robustness Failures

Beyond security-motivated attacks, LLMs exhibit robustness failures under semantically neutral input variations. Let $\phi: \mathcal{X} \to \mathcal{X}$ be a semantic-preserving transformation (paraphrase, token order permutation within examples, etc.). Ideal robustness requires:

$$M(x) = M(\phi(x)) \quad \forall x \in \mathcal{X}, \phi \in \Phi$$

where $\Phi$ is the set of valid semantic-preserving transformations. Empirically, this does not hold. Zhao et al. (2021) showed that few-shot performance varies by up to 30 percentage points across permutations of demonstration order in GPT-3, despite the demonstrations being semantically equivalent. Lu et al. (2022) confirmed this across 13 models and tasks. The model is implicitly using the position and recency of examples as a proxy for their importance—a statistical artifact of training that is not semantically grounded.

4. Discussion

4.1 Defense Approaches and Their Limitations

Input sanitization attempts to detect and neutralize injection attempts before they reach the model. Heuristic approaches use classifiers trained to detect injection-style phrasing. These have limited effectiveness: natural language is expressive enough that injections can be rephrased to evade classifiers, and the distribution of attack phrasing is open-ended. More fundamentally, the distinction between legitimate complex instructions and injection attempts is not always well-defined—a system prompt telling the model to “ignore irrelevant context” is structurally similar to an injection telling it to “ignore previous instructions.”

Instruction hierarchy enforcement is the approach taken by OpenAI in their GPT-4 system prompt documentation and more formally in subsequent work. The idea is to train the model to treat system-level instructions as higher-priority than user-level instructions, which are in turn higher-priority than context retrieved from tools. OpenAI’s “instruction hierarchy” paper (Wallace et al., 2024) trains models with a structured priority system and evaluates robustness against injection in each tier. This significantly reduces, but does not eliminate, injection success rates. The failure mode is that instructions appearing in lower tiers can still influence model behavior when phrased cleverly—the model does not have a formal mechanism to ignore lower-priority content entirely.

Adversarial training augments the training distribution with adversarial examples. This is the standard approach for adversarial robustness in classification settings. For LLMs, adversarial training against natural language jailbreaks (Wei et al., 2023) improves robustness to the training distribution of attacks but exhibits the same brittleness as adversarial training in vision: adaptive attackers who know the defense can usually find new attack vectors. Adversarial training against GCG-style suffix attacks provides stronger guarantees but only against that specific attack class.

Formal verification approaches attempt to provide certified robustness guarantees. For discrete input spaces (token sequences), verification is PSPACE-hard in general. Randomized smoothing techniques from vision have been adapted to NLP settings (Jia et al., 2019; Zeng et al., 2023) but provide certificates only for small perturbation radii (e.g., 1-2 token substitutions) and incur significant computational cost at inference time.

4.2 Structural Causes and the Alignment Tax

The fundamental difficulty is that LLMs represent instructions and data in the same token embedding space with no architectural privilege separation. A transformer processing a sequence has no mechanism to tag tokens as “trusted instruction” versus “untrusted data”—all tokens interact through the same attention mechanism. This is in contrast to classical computing systems where the separation between code and data is enforced at the architecture level (privilege rings, memory protection).

There is an additional tension between helpfulness alignment and robustness. Models trained to follow instructions flexibly are, by design, more susceptible to instruction injection. Wei et al. (2023) argue that jailbreaks succeed in part because they activate the model’s instruction-following behavior: a sufficiently compelling framing convinces the model that compliance is the helpful action. Reducing this susceptibility may require accepting some reduction in legitimate instruction-following capability—an “alignment tax” with no obvious optimal trade-off point.

The broader adversarial robustness landscape suggests that current neural network architectures, regardless of scale, do not learn semantically invariant representations in the formal sense. They learn to approximate semantic equivalence on the training distribution but remain sensitive to distributional shifts, including adversarially crafted shifts. Scaling alone does not appear to resolve this: GPT-4 and Claude 3 exhibit qualitatively similar vulnerabilities to GPT-3.5 and earlier models, differing primarily in the sophistication required to exploit them.

4.3 Implications for Agentic Deployment

The risk profile of prompt injection scales dramatically with model capability and granted permissions. A text-only chatbot that outputs tokens to a user is relatively contained: the worst outcome is harmful text output. An agentic system with tool use—web browsing, code execution, file system access, email sending—can be caused to take irreversible actions with real-world consequences. The principle of least privilege (granting models only the permissions necessary for their intended task) is a necessary but not sufficient mitigation: it limits blast radius but does not prevent injection from causing the model to misuse its granted permissions.

Sandboxing and human-in-the-loop confirmation for high-stakes actions provide meaningful risk reduction. Models that must confirm destructive or irreversible actions with a human before executing provide a circuit breaker against agentic injection attacks. The cost is reduced autonomy—which is precisely what makes agentic systems useful in the first place. Calibrating this trade-off requires accurate threat modeling that the field currently lacks formal tools for.

5. Conclusion

Prompt injection and the broader adversarial robustness problem in LLMs represent a fundamental mismatch between the capabilities that make large language models useful and the properties that would make them secure. The same flexibility that enables instruction-following across diverse tasks enables an adversary to inject their own instructions. The same sensitivity to context that enables in-context learning makes models sensitive to adversarial context manipulation. These are not incidental implementation bugs but structural properties of current architectures and training paradigms.

Current defenses—input sanitization, instruction hierarchy, adversarial training—provide heuristic resistance that is meaningful in practice but lacks formal guarantees. The adversarial ML community’s standard notion of certified robustness has not transferred to the LLM setting at the scale or perturbation radius that would be practically relevant for security applications.

Progress is likely to require multiple complementary approaches: architectural changes that provide stronger separation between trusted and untrusted content; training methods that explicitly optimize for semantic invariance rather than relying on it as an emergent property; and deployment practices that limit the blast radius of successful attacks through capability restriction and human oversight. The alternative—deploying increasingly capable agentic systems with injection-vulnerable models—is a trajectory that the field should approach with significant caution.

References

- Greshake, K., Abdelnabi, S., Mishra, S., Endres, C., Holz, T., & Fritz, M. (2023). Not what you’ve signed up for: Compromising real-world LLM-integrated applications with indirect prompt injection. arXiv:2302.12173.

- Jia, R., & Liang, P. (2017). Adversarial examples for evaluating reading comprehension systems. EMNLP 2017.

- Jia, R., Raghunathan, A., G�ksel, K., & Liang, P. (2019). Certified robustness to adversarial word substitutions. EMNLP 2019.

- Lu, Y., Bartolo, M., Moore, A., Riedel, S., & Stenetorp, P. (2022). Fantastically ordered prompts and where to find them: Overcoming few-shot prompt order sensitivity. ACL 2022.

- Perez, F., & Ribeiro, I. (2022). Ignore previous prompt: Attack techniques for language models. NeurIPS 2022 ML Safety Workshop.

- Wallace, E., Feng, S., Kandpal, N., Gardner, M., & Singh, S. (2019). Universal adversarial triggers for attacking and analyzing NLP. EMNLP 2019.

- Wallace, E., et al. (2024). The instruction hierarchy: Training LLMs to prioritize privileged instructions. arXiv:2404.13208.

- Wei, A., Haghtalab, N., & Steinhardt, J. (2023). Jailbroken: How does LLM safety training fail? NeurIPS 2023.

- Zeng, Y., Chen, S., Zhang, W., Su, H., Zhu, J., & Song, D. (2023). Certified robustness to text adversarial attacks by randomized [MASK]. Computational Linguistics, 49(2).

- Zhao, Z., Wallace, E., Feng, S., Klein, D., & Singh, S. (2021). Calibrate before use: Improving few-shot performance of language models. ICML 2021.

- Zou, A., Wang, Z., Kolter, J. Z., & Fredrikson, M. (2023). Universal and transferable adversarial attacks on aligned language models. arXiv:2307.15043.